An epidemiology-inspired model for false information mitigation in social networks

Recent Ph.D. graduate Bhavtosh Rath’s dissertation focused on the timely issue of how ‘fake news’ spreads

Social networks like Facebook, Twitter, and WhatsApp are used by millions of people around the world to share information and personal opinions. While these platforms are a great way for people to stay connected, they have unfortunately also enabled the spread of unverified and false information, popularly known as ‘fake news’.

Computational models for detecting and preventing the spread of false information have gained attention over the last decade. Much of this work has focused on determining the veracity of information and analyzing the content of ‘fake news’. To extend this research, recent Ph.D. graduate Bhavtosh Rath proposed a complementary model inspired by the domain of epidemiology.

“The fact that the outcome of the 2016 presidential election was shaped by polarizing social media campaigns (that mostly relied on unverified claims), as well as ‘fake news’ being acknowledged as a major societal problem inspired my research on fake news mitigation,” shared Rath.

“Professor Jaideep Srivastava had a proven track record of doing research on user modeling on social networks using behavioral data, so the decision to work under his advisorship for my Ph.D. was an obvious one,” he continued.

Thesis details

Rath’s research interests lie at the intersection of machine learning and social network analysis, including how to mine actionable insights from social networks. For his doctoral dissertation, he developed a framework to identify the people who are most likely to become fake news spreaders. The framework draws on epidemiology: false information is analogous to a disease, the people and communities on a social network are analogous to a population, and how likely people are to believe an information endorser is analogous to their vulnerability to the disease.

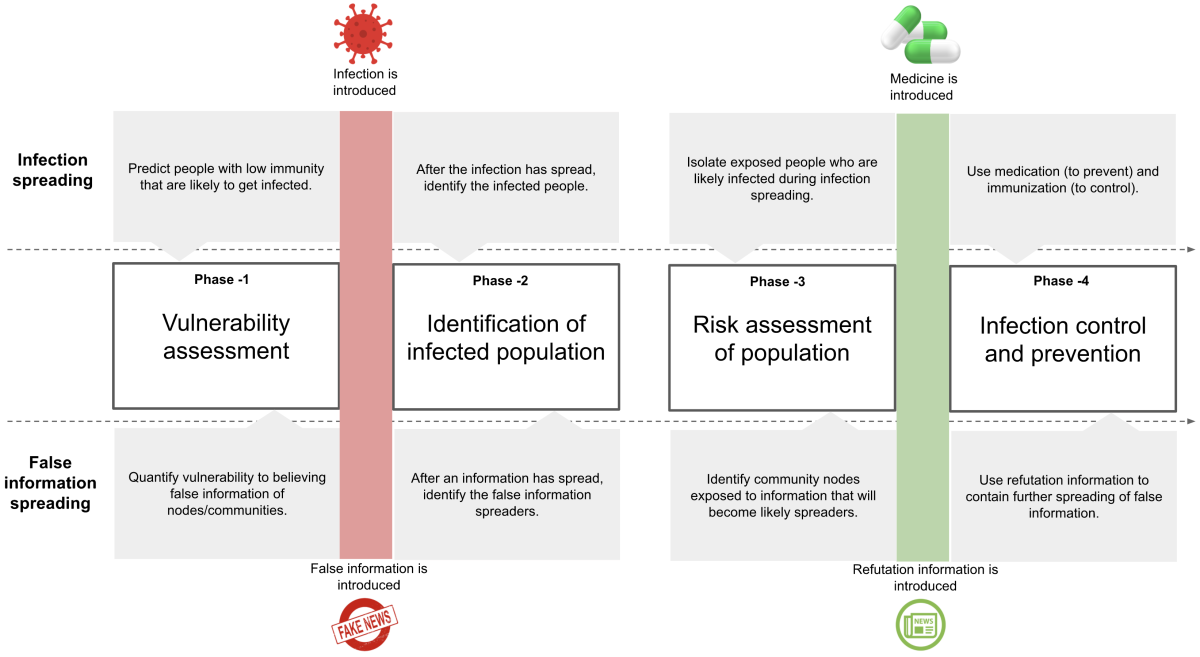

Rath proposed four sequential phases of social network analysis:

- In the first phase, called vulnerability assessment, he estimates how likely the online community is to believe false information before ‘fake news’ starts spreading. This is equivalent to assessing the vulnerability (or immunity) of people before an infection begins to spread.

- Once the infection is introduced into the system, phase two begins: identifying the likely spreaders of misinformation.

- Phase three is a risk assessment of the population, where the goal is to identify specific nodes within the social network that are more likely to spread the information. This is the equivalent of contact tracing during a pandemic, where the exposed population is identified so it can be quarantined to prevent the spread of the infection (or the misinformation).

- The final phase is infection control and prevention, where Rath classifies people as false information spreaders, refutation information spreaders, or nonspreaders. This classification can inform strategies that target people with refutation information, either to turn a false information spreader into a true information spreader (i.e., using refutation information as an antidote) or to prevent a person from becoming a false information spreader (i.e., using refutation information as a vaccine).

Rath tested his framework on real-world data by conducting experiments on Twitter. Working with his advisor, Professor Jaideep Srivastava, he showed the effectiveness of the proposed model and confirmed that the spread of false information is more sensitive than the spread of true information to behavioral properties like trust and credibility. These encouraging results should motivate further research, and the framework could also be applied to other problems, such as cyberbullying, abuse detection, or viral marketing.

Next steps

Bhavtosh Rath successfully completed his doctorate in December of 2020. During his graduate studies, he published four conference papers and two journal papers on his core thesis, as well as two additional journal articles in collaboration with the Hubbard School of Journalism and Mass Communication.

As of February 2021, he is working at Target Corporation as a Senior AI Scientist on the AI Sciences Personalization & Search team.

“While working on fake news mitigation research, I spent a lot of time gathering technical knowledge and learning how to formulate research problems. At Target, I have been able to apply both these skills to successfully build models for personalized recommendations for customers,” said Rath. “Being able to work on applications that impact people’s regular lives has been a major motivation and continues to drive me.”